Why is the Universe Quantum?

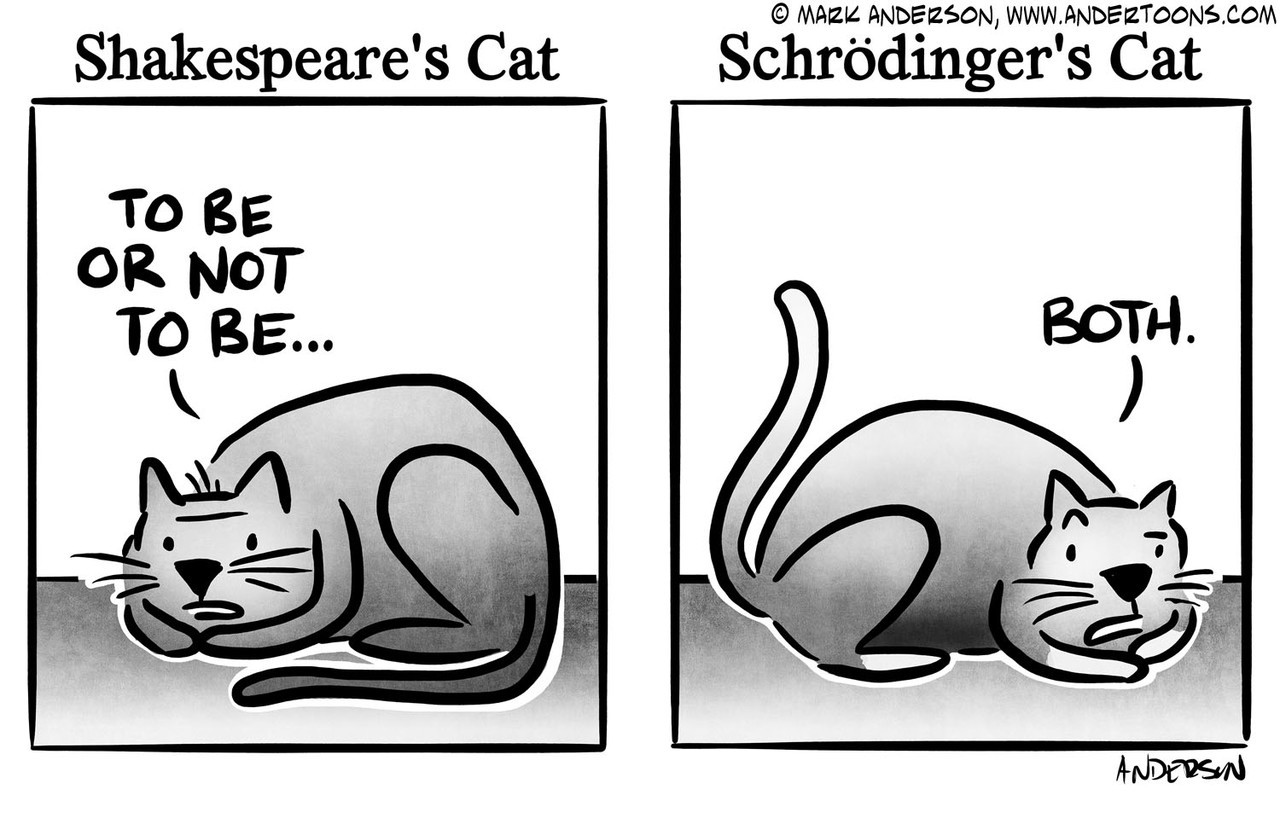

Quantum mechanics is such a bizarre theory. In the iconic thought experiment, Schrödinger’s cat couldn’t decide whether to be dead or alive until it was looked at. It’s natural to ask ourselves: why is the Universe like this? Must it be so, or could a classical universe equally well support life as we know it?

A beautiful paper by Lucien Hardy demonstrates that quantum mechanics is the logical consequence of five simple axioms. The first four are fairly straightforward and are satisfied by classical theories. The fifth axiom, that of continuous transitions, is the most interesting.

To understand it in more detail, let’s imagine that we’re designing the universe. We could make it discrete, like a cellular automaton. However, we would then miss out on the nicer symmetries of continuous space-time. To give an example, continuous space can grant equal status to all its directions. The line segment connecting any pair of points determines such a direction; this segment represents the unique shortest path between its endpoints; furthermore, it has a unique midpoint which is equidistant from its endpoints. Any two points in time likewise have a midpoint between them. A cellular grid lacks these symmetries.

Let’s, at the very least, commit to a continuous time. It seems natural for space to be continuous too, with all its points considered equally good positions for objects to occupy. Even if restricted to a finite-sized region, such as the interior of a box, an object can occupy any of infinitely many possible states. If our laws of physics allow infinitely precise measurements (say, perhaps, by shining incredibly weak and narrow beams of light to “see” the object’s position), then our box can store an unlimited amount of information. In such a world, it would seem that computers of bounded size can be constructed to have unbounded power: for instance, by making their parts arbitrarily small. Natural selection and life, as we know them, thrive on the challenge of searching for solutions intelligently, using bounded computation. To avoid trivializing life, it’s essential to limit computational resources somehow. One solution is to make it so that finite-sized systems have a finite number of distinguishable states. Let’s define exactly what we mean.

States and Measurement

Consider a collection of states \(S_1, S_2, \ldots, S_n\). Suppose we are given an object, known to be in one of these \(n\) states, but we don’t know which one. We’ll say this collection of states is reliably distinguishable if there exists a measurement technique that can tell us, with certainty, which state it’s in. This is a strong, clear-cut test. On the other hand, suppose we have a machine which can produce, at the press of a button, a freshly prepared object with a fixed state from the collection, but once again we don’t know which one. We’ll say this collection of states is statistically distinguishable if, by performing experiments on enough objects from the machine, we can infer which state is prepared by the machine. Finally, we’ll say a pair of states is statistically indistinguishable if it’s not statistically distinguishable. We make a few observations regarding our definitions:

First, any reliably distinguishable collection is obviously statistically distinguishable as well. Second, statistical indistinguishability is an equivalence relation. For all practical purposes, any statistically indistinguishable pair might as well be considered to be the same state; by defining states in this way, it follows that any collection of distinct states must be statistically distinguishable. Our final observation concerns states which are statistically, but not reliably, distinguishable. In this case, it follows that some measurements will necessarily alter the state: if they did not, then any series of measurements that we would perform on objects from the machine could instead be performed on a lone object in the state in question, which would make the states reliably distinguishable after all.
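To make the gap between the two notions concrete, here is a minimal Python sketch. Everything in it is an illustrative assumption rather than part of Hardy's paper: two hypothetical states that give outcome 1 on some fixed yes/no measurement with probabilities `p` and `q`, equal prior odds, and a simple threshold rule for guessing. It computes the exact chance of guessing wrong after `n` fresh objects from the machine.

```python
from math import comb


def binom_pmf(n: int, k: int, p: float) -> float:
    """Probability of seeing exactly k ones in n independent measurements."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)


def error_probability(n: int, p: float, q: float) -> float:
    """Chance of misidentifying the machine's state after n measurements.

    Assumptions (illustrative only): p < q, both states equally likely a
    priori, and we guess the q-state whenever the number of ones exceeds
    the midpoint between the two expected counts.
    """
    threshold = n * (p + q) / 2
    # Machine prepares the p-state, but we see too many ones.
    err_p = sum(binom_pmf(n, k, p) for k in range(n + 1) if k > threshold)
    # Machine prepares the q-state, but we see too few ones.
    err_q = sum(binom_pmf(n, k, q) for k in range(n + 1) if k <= threshold)
    return 0.5 * (err_p + err_q)


if __name__ == "__main__":
    for n in (1, 10, 100, 1000):
        print(n, error_probability(n, 0.5, 0.6))
```

With `p = 0.5` and `q = 0.6`, a single measurement guesses wrong 45% of the time, so this pair is not reliably distinguishable by that measurement. The error shrinks as `n` grows, which is what statistical distinguishability asks for.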

From Bit to Qubit

Let’s return to looking at a closed system in our made-up universe. It evolves with continuous time. We want a finite maximum on the number of states which can be reliably distinguished from one another: for simplicity, let’s assume this maximum is two, though our arguments can be generalized. Suppose A and B are two such mutually distinguishable states, or eigenstates. Is it feasible for A and B to be the only states taken by the system? In order to do anything interesting in continuous time, we should allow A to transition to B after a non-zero length of time. However, if this length of time were deterministic, say fixed to \(\Delta t\), then that would imply the existence of additional states. Indeed, let C be the system’s state after evolving A for a time \(\Delta t / 2\). Since C is prior to the transition, our A-B measurement would detect it as A; thus, C is distinct from B. On the other hand, C would be detected as B if we perform a “delayed measurement”, in which we wait an additional \(\Delta t / 2\) before applying our A-B measurement; thus, C is also distinct from A. We must conclude that C is a third state, different from A and B. In fact, by waiting for different amounts of time, we find an infinite continuum of intermediate states.

In a last desperate attempt to avoid adding extra states, we might allow the transition times to be random, like radioactive decay. However, if we’re allowing genuinely random processes into our theory, we might as well consider the “maybe-A maybe-B” situation to itself be a state: after all, distinct probabilistic mixtures of distinguishable states remain statistically distinguishable from one another. This formalism has the advantage that an outsider, not involved in the experiment, can model the evolution of our system deterministically: from their view, the state A simply evolves into “maybe”-states that contain increasing shares of B. When an experimenter measures the state, from their perspective it can be said that the state “collapses” to either A or B. The outsider, who doesn’t communicate with the experimenter, would then say that the experimenter became “entangled” with the system: together, they are jointly in a state of “maybe A, with the experimenter seeing A; or maybe B, with the experimenter seeing B”. Substituting the dead and alive states of Schrödinger’s cat for A and B, the resemblance becomes clear! The entangled experimenter, having observed the system, is resigned to either the A branch or the B branch. For the unentangled outsider, on the other hand, neither branch has “materialized”[1].
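The two viewpoints can be compared in a small Python sketch. The exponential waiting-time law, the `rate` parameter, and all function names are my own assumptions, chosen to mimic radioactive decay; nothing here is taken from Hardy's paper. The outsider tracks a mixture that evolves deterministically, while each experimenter sees a single definite outcome.

```python
import math
import random


def mixture_state(t: float, rate: float = 1.0) -> tuple[float, float]:
    """Outsider's view: deterministic (share of A, share of B) at time t."""
    p_a = math.exp(-rate * t)
    return (p_a, 1.0 - p_a)


def measure_once(t: float, rate: float, rng: random.Random) -> str:
    """Experimenter's view: one run 'collapses' to a definite A or B."""
    transition_time = rng.expovariate(rate)  # random moment at which A becomes B
    return "A" if transition_time > t else "B"


def observed_fraction_b(t: float, rate: float = 1.0,
                        trials: int = 20000, seed: int = 0) -> float:
    """Fraction of independent runs in which the experimenter sees B."""
    rng = random.Random(seed)
    hits = sum(measure_once(t, rate, rng) == "B" for _ in range(trials))
    return hits / trials


if __name__ == "__main__":
    for t in (0.0, 0.5, 1.0, 2.0):
        print(t, mixture_state(t)[1], observed_fraction_b(t))
```

Over many runs, the experimenters' tallies should approach the outsider's share of B, even though no single experimenter ever sees a “maybe”.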

Recall that A and B are a maximal set of reliably distinguishable states for our system. Having accepted that our theory must support additional states that are only statistically distinguishable, we can consider alternative formulations, aside from the probabilistic mixtures that we’ve just discussed. Indeed, the probabilistic theory has some shortcomings. For instance, it’s not clear how to make nice reversible laws that transition from A to B. Upon reaching B, such a law should begin to transition back to A; however, how would a “maybe-A maybe-B” state know whether it’s currently on the “forward swing” from A to B, or on the “return swing” back to A? For reasons such as this, it becomes convenient to use a complex number-valued variant of probability theory, in which, rather than swinging linearly from A to B, the states are arranged on a sphere, transitioning along its geodesics. I’ll defer to Hardy for the rigorous argument. The upshot is that complex numbers have phases and amplitudes, which allow the “random” outcomes to interfere with one another, constructively and destructively, much like vibrating strings. Quantum weirdness ensues.
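As a hedged illustration of how phases resolve the “forward swing” versus “return swing” puzzle, the sketch below evolves a two-amplitude state under one particular rotation. That rotation is simply a convenient choice of mine, not Hardy's construction, and the function names are hypothetical. The states a quarter and three quarters of the way through the swing have the same 50/50 odds, yet their amplitudes differ in sign, making them perfectly distinguishable from each other.

```python
import math

# State A as a pair of complex amplitudes (amplitude of A, amplitude of B).
A = (1 + 0j, 0j)


def evolve(state, t):
    """Rotate the state for a time t (assumed units: a full A -> B swing takes pi/2)."""
    a, b = state
    c, s = math.cos(t), -1j * math.sin(t)
    return (c * a + s * b, s * a + c * b)


def probabilities(state):
    """Chances of an A-B measurement reporting A or B."""
    a, b = state
    return (abs(a) ** 2, abs(b) ** 2)


def overlap(s1, s2):
    """Squared inner product: 1 for identical states, 0 for reliably distinguishable ones."""
    return abs(s1[0].conjugate() * s2[0] + s1[1].conjugate() * s2[1]) ** 2


if __name__ == "__main__":
    forward = evolve(A, math.pi / 4)       # halfway from A to B
    back = evolve(A, 3 * math.pi / 4)      # halfway on the way back to A
    print(probabilities(forward), probabilities(back))
    print(overlap(forward, back))
    # Evolving the 50/50 state further lands on B with certainty,
    # which a classical 50/50 mixture could not do.
    print(probabilities(evolve(forward, math.pi / 4)))
```

The last line is a small instance of interference: two successive “half swings” add up to a certain outcome instead of remaining a coin flip.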

Waves

We’ve sketched out quantum mechanics for a two-eigenstate system, or qubit. While a classical computer bit has clear-cut 0 and 1 states, we saw that a qubit can take on a variety of “in-between” states, which can be conceptualized on a sphere[2]. A real-life example of a qubit is the spin of an electron. But what about the position of an electron, or of anything for that matter? We still want our continuous spatial geometry, while somehow bounding the number of distinguishable states!

Here, nature has a trick up its sleeve: the position and momentum are Fourier transforms of one another. Since Fourier transforms are also relevant to how we produce and perceive sound, we can illustrate by analogy: if you think of position as being spread out in a wave like a plucked guitar string, then the momentum would be spread out like the frequency spectrum that characterizes the pitch and timbre of its sound. Notice that a string cannot simultaneously have both a precise frequency and a precise position of displacement: a pure tone displaces the entire string, whereas a pure point displacement lacks a frequency. In the same way, physical objects cannot simultaneously have a pure position and a pure momentum[3]; even attaining a pure position requires infinite energy. This is the Heisenberg uncertainty principle.
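The trade-off can be checked numerically with a rough sketch. The assumptions are mine: a Gaussian wave packet on a grid of 256 unit-spaced samples, a naive discrete Fourier transform written out by hand, and frequency measured in cycles per sample. Squeezing the packet in position widens its spectrum so that the product of the two spreads stays near \(1/(4\pi)\), which corresponds to \(\hbar/2\) once frequency is converted to momentum.

```python
import cmath
import math


def spread(values, weights):
    """Standard deviation of `values` under the (unnormalized) `weights`."""
    total = sum(weights)
    mean = sum(v * w for v, w in zip(values, weights)) / total
    var = sum((v - mean) ** 2 * w for v, w in zip(values, weights)) / total
    return math.sqrt(var)


def position_and_frequency_spread(sigma: float, n: int = 256):
    """Return (position spread, frequency spread) of a Gaussian wave packet."""
    xs = [i - n // 2 for i in range(n)]
    # Amplitude chosen so that |psi|^2 has standard deviation sigma.
    psi = [math.exp(-x * x / (4 * sigma**2)) for x in xs]
    # Naive O(n^2) discrete Fourier transform; fine for a small grid.
    spectrum = [
        sum(p * cmath.exp(-2j * math.pi * k * i / n) for i, p in enumerate(psi))
        for k in range(n)
    ]
    # Map DFT bin k to a signed frequency in cycles per sample.
    freqs = [(k if k < n // 2 else k - n) / n for k in range(n)]
    dx = spread(xs, [p * p for p in psi])
    df = spread(freqs, [abs(c) ** 2 for c in spectrum])
    return dx, df


if __name__ == "__main__":
    for sigma in (2.0, 4.0, 8.0):
        dx, df = position_and_frequency_spread(sigma)
        print(sigma, dx, df, dx * df)
```

A Gaussian is the special case in which the product reaches its minimum; other wave shapes give a larger product.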

Unfortunately, I can’t sketch this out more convincingly without diving into the math[4]. Nonetheless, at its core, the theory is the same as in the simpler qubit system. Instead of A and B, the eigenstates now are the component sine waves that combine together to make a wave packet.

Conclusions

There is a great irony in the argument we’ve laid out. Because quantum mechanics appears so mysterious, it has become fashionable to speculate that it may hold the key to more powerful forms of computation, intelligence, and even consciousness. There do exist computational problems for which the fastest known algorithms are quantum, but that’s only if we presuppose a discrete model of computation, corresponding to the regime of our universe in which quantum states decohere. Our discussion suggests, in fact, that the true “purpose” of quantum mechanics may be directly antithetical to these popular interpretations: it serves not to increase our power, but to constrain it! From the perspective of our universe-building exercise, quantum mechanics offers the best of both worlds: the symmetries of a continuous universe, with the informational constraints of a discrete one.

Please note that, unlike the paper on which they’re based, my arguments here are not at all rigorous. Nonetheless, I hope they may provide some intuition into the mysterious nature of quantum mechanics, without demanding as much technical depth. Thanks to Sid Jain for pointing me to the paper!

1. This distinction is unimportant in classical probability theory, where the branches add up independently. However, in quantum theory, an outsider may yet have the branches interfere.

2. I wonder… could this help explain why space has three dimensions? A competing justification is that it takes exactly three dimensions to embed all graphs.

3. In everyday life, when you don’t need too much precision, objects may appear to have definite positions and momenta. Similarly, musical composers may notate definite pitches occurring at definite times. However, if you could analyze just a microsecond from a live recording, you wouldn’t easily decipher the pitches playing at that instant.

4. Would anyone like to demonstrate using animations, perhaps?